Léopold Maytié

Ph.D Student |

|

About

I am a third-year Ph.D. student at the université de Toulouse and ANITI, under the supervision of Rufin VanRullen in the NeuroAI Team at CerCo. My research is centered on adapting a cognitive science theory known as Global Workspace Theory to the domain of artificial intelligence. The objective of my work is to develop and enhance multimodal models, integrating various modalities, particularly within the context of robotics. Specifically, I aim to create models that facilitate learning in a manner more aligned with human cognition, requiring less supervision and fewer data. I am particularly focused on demonstrating the advantages of such models for reinforcement learning and robotics applications.

As part of my thesis, I maintain a strong interest in multimodal AI models, perception and control learning in robotics, reinforcement learning, and world models. Additionally, I developed an interest about embodiment theory, which I would like to explore to bridge cognitive science and robotics, two important topics in my thesis.

News

- 03/10/2025: Presenting the project "A Journey into Intelligent Machines" at la Nuit des Chercheurs

- 29/09/2025: Comic Strip "Dans la tête d'un robot"

- 07/2025: Practical Sessions at Max Planck Cognition Academy in Dresden, Germany

- 25-26/11/2024: Poster presentation at ANITI Days 2024

- 28-30/10/2024: Poster presentation at EWRL 2024

- 30/09 - 03/10/2024: Presenting at the workshop Heterogeneous Data and Large Representation Models in Science

- 09-12/08/2024: Poster and oral presentations at RLC 2024

- 01/08/2024: Presentation at Mila Robot Learning Seminar

- 10/06/2024: Paper accepted at IEEE TNNLS

- 15/05/2024: Paper accepted at RLC 2024

Research

@article{maytie2025multimodal,

title={Multimodal Dreaming: A Global Workspace Approach to World Model-Based Reinforcement Learning},

author={Mayti{\'e}, L{\'e}opold and Johannet, Roland Bertin and VanRullen, Rufin},

journal={arXiv preprint arXiv:2502.21142},

year={2025}

}

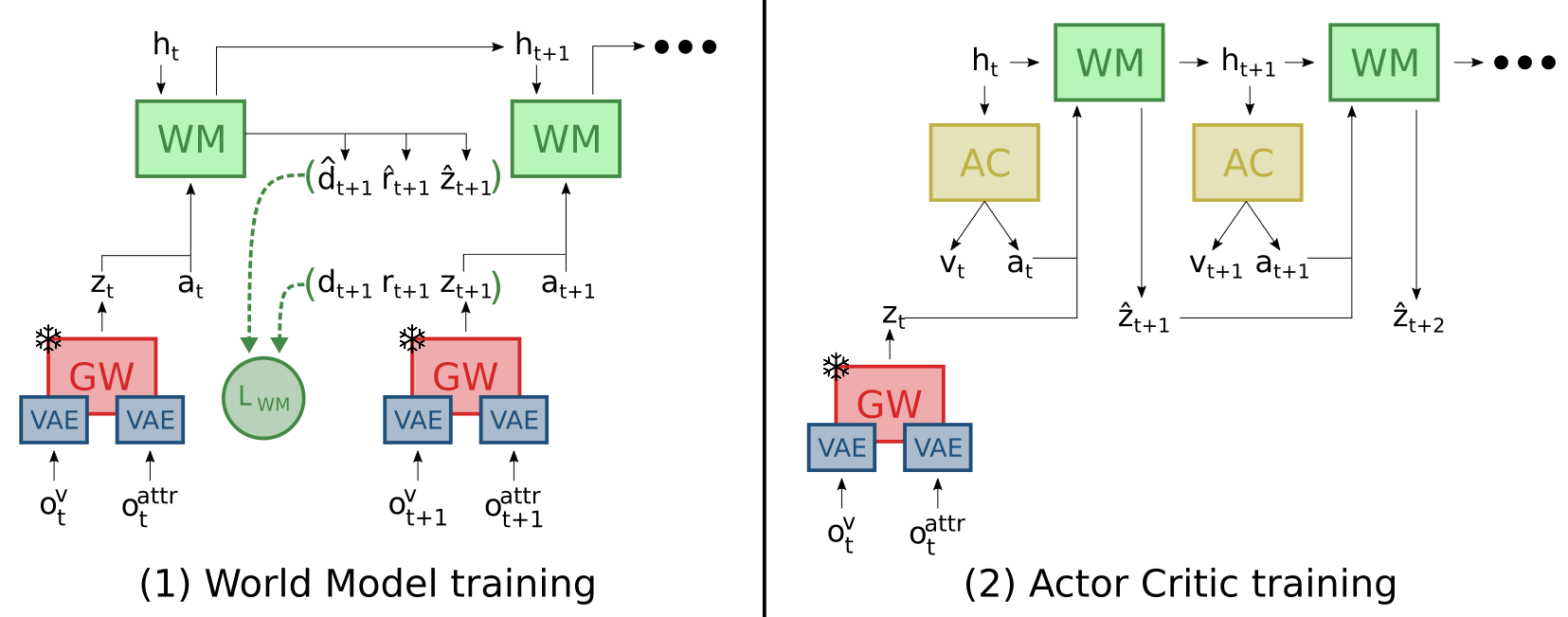

Humans leverage rich internal models of the world to reason about the future, imagine counterfactuals, and adapt flexibly to new situations. In Reinforcement Learning (RL), world models aim to capture how the environment evolves in response to the agent's actions, facilitating planning and generalization. However, typical world models directly operate on the environment variables (e.g. pixels, physical attributes), which can make their training slow and cumbersome; instead, it may be advantageous to rely on high-level latent dimensions that capture relevant multimodal variables. Global Workspace (GW) Theory offers a cognitive framework for multimodal integration and information broadcasting in the brain, and recent studies have begun to introduce efficient deep learning implementations of GW. Here, we evaluate the capabilities of an RL system combining GW with a world model. We compare our GW-Dreamer with various versions of the standard PPO and the original Dreamer algorithms. We show that performing the dreaming process (i.e., mental simulation) inside the GW latent space allows for training with fewer environment steps. As an additional emergent property, the resulting model (but not its comparison baselines) displays strong robustness to the absence of one of its observation modalities (images or simulation attributes). We conclude that the combination of GW with World Models holds great potential for improving decision-making in RL agents.

@article{maytie2024zero,

title={Zero-shot cross-modal transfer of Reinforcement Learning policies through a Global Workspace},

author={Mayti{\'{e}}, L{\'{e}}opold and Devillers, Benjamin and Arnold, Alexandre and VanRullen, Rufin},

journal={Reinforcement Learning Journal},

volume={1},

issue={1},

year={2024}

}

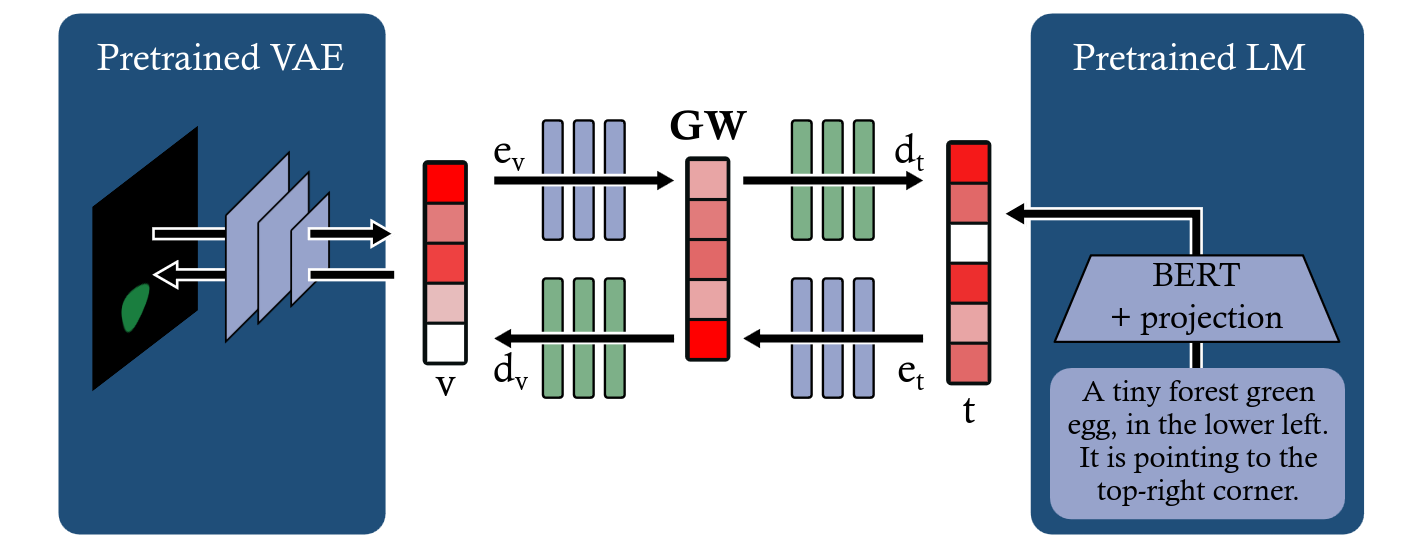

Humans perceive the world through multiple senses, enabling them to create a comprehensive representation of their surroundings and to generalize information across domains. For instance, when a textual description of a scene is given, humans can mentally visualize it. In fields like robotics and Reinforcement Learning (RL), agents can also access information about the environment through multiple sensors; yet redundancy and complementarity between sensors is difficult to exploit as a source of robustness (e.g. against sensor failure) or generalization (e.g. transfer across domains). Prior research demonstrated that a robust and flexible multimodal representation can be efficiently constructed based on the cognitive science notion of a 'Global Workspace': a unique representation trained to combine information across modalities, and to broadcast its signal back to each modality. Here, we explore whether such a brain-inspired multimodal representation could be advantageous for RL agents. First, we train a 'Global Workspace' to exploit information collected about the environment via two input modalities (a visual input, or an attribute vector representing the state of the agent and/or its environment). Then, we train a RL agent policy using this frozen Global Workspace. In two distinct environments and tasks, our results reveal the model's ability to perform zero-shot cross-modal transfer between input modalities, i.e. to apply to image inputs a policy previously trained on attribute vectors (and vice-versa), without additional training or fine-tuning. Variants and ablations of the full Global Workspace (including a CLIP-like multimodal representation trained via contrastive learning) did not display the same generalization abilities.

@ARTICLE{10580966,

author={Devillers, Benjamin and Maytié, Léopold and VanRullen, Rufin},

journal={IEEE Transactions on Neural Networks and Learning Systems},

title={Semi-Supervised Multimodal Representation Learning Through a Global Workspace},

year={2024},

volume={},

number={},

pages={1-15},

keywords={Task analysis;Training;Visualization;Trajectory;Synchronization;Representation learning;Annotations;Cycle-consistency;global workspace (GW) theory;multimodal learning;semi-supervised learning},

doi={10.1109/TNNLS.2024.3416701}

}

Recent deep learning models can efficiently combine inputs from different modalities (e.g., images and text) and learn to align their latent representations, or to translate signals from one domain to another (as in image captioning, or text-to-image generation). However, current approaches mainly rely on brute-force supervised training over large multimodal datasets. In contrast, humans (and other animals) can learn useful multimodal representations from only sparse experience with matched cross-modal data. Here we evaluate the capabilities of a neural network architecture inspired by the cognitive notion of a "Global Workspace": a shared representation for two (or more) input modalities. Each modality is processed by a specialized system (pretrained on unimodal data, and subsequently frozen). The corresponding latent representations are then encoded to and decoded from a single shared workspace. Importantly, this architecture is amenable to self-supervised training via cycle-consistency: encoding-decoding sequences should approximate the identity function. For various pairings of vision-language modalities and across two datasets of varying complexity, we show that such an architecture can be trained to align and translate between two modalities with very little need for matched data (from 4 to 7 times less than a fully supervised approach). The global workspace representation can be used advantageously for downstream classification tasks and for robust transfer learning. Ablation studies reveal that both the shared workspace and the self-supervised cycle-consistency training are critical to the system's performance.

Teaching

2025

- Introduction to artificial intelligence, Lecturer, M2 Neuroscience,

- July 2025: Max Planck Cognition Academy, Practical Sessions, Dresden, Germany

2023-2025

- Calcul scientifique et apprentissage automatique, Practical Work Teacher, M1 Computer Science,

- Introduction to artificial intelligence, Lecturer, M2 Neuroscience,

- Intelligent digital architecture and data sharing-reuse for predictive medicine, Lecturer, M2 Toulouse graduate school of cancer ageing and rejuvenation,

Talks

2024

- 01/10/2024: Heterogeneous Data and Large Representation Models in Science

- 10/08/2024: RLC 2024

- 01/08/2024: Mila Robot Learning Seminar

- 28/03/2024: Défi-Clé Robotique Centrée sur l'humain Seminar 2024